Behavioral Economics: Prospect Theory

One of the best-known papers in economic theory is Kahneman and Tversky’s 1979 paper on Prospect Theory. Prospect Theory describes how people violate Expected Utility Theory by valuing gains and losses differently.

Before we get into Prospect Theory (PT), here’s a little background on Expected Utility Theory (EUT). EUT describes how a decision maker chooses between risky or uncertain prospects by comparing their expected utility values. Under EUT, the framing and process of choosing should be irrelevant; in other words, people should always make the same choice no matter how the options are presented.
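To make the EUT calculation concrete, here is a minimal Python sketch using the gain options from the study described in this post. It assumes a hypothetical square-root utility function and, as a simplification, measures outcomes as amounts won rather than as final wealth; the names `expected_utility` and `sqrt_utility` are illustrative, not taken from the paper. Notice that only probabilities and outcomes go into the calculation. Nothing about how the options are worded does, which is the sense in which framing should be irrelevant under EUT.

```python
import math
from typing import Callable, Sequence, Tuple

# A prospect is a list of (probability, outcome) pairs whose probabilities sum to 1.
Prospect = Sequence[Tuple[float, float]]


def expected_utility(prospect: Prospect, utility: Callable[[float], float]) -> float:
    """Probability-weighted sum of utilities: EU = sum of p_i * u(x_i)."""
    total_prob = sum(p for p, _ in prospect)
    if not math.isclose(total_prob, 1.0):
        raise ValueError(f"probabilities must sum to 1, got {total_prob}")
    return sum(p * utility(x) for p, x in prospect)


# Hypothetical concave utility (square root), chosen only for illustration.
def sqrt_utility(x: float) -> float:
    return math.sqrt(x)


gamble = [(0.75, 5000.0), (0.25, 0.0)]  # 75% chance of $5,000, else nothing
sure_thing = [(1.0, 3000.0)]            # $3,000 for certain

eu_gamble = expected_utility(gamble, sqrt_utility)    # about 53.03
eu_sure = expected_utility(sure_thing, sqrt_utility)  # about 54.77
print("prefer sure thing" if eu_sure > eu_gamble else "prefer gamble")
```

With this concave utility, the sure $3,000 comes out slightly ahead of the gamble even though the gamble has the higher expected dollar value, so EUT on its own can accommodate some risk aversion.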

But, as we discussed last week, people are not perfectly rational. While EUT describes how we should act, PT helps us understand how we actually act.

One simple study to illustrate PT:

Two groups of people were each asked to choose between two options. The first group chose between:

a) 75% chance of winning $5,000, 25% chance of winning nothing.

b) 100% chance of winning $3,000.

The second group was given a similar, but opposite, choice:

a) 75% chance of losing $5,000, 25% chance of losing nothing.

b) 100% chance of losing $3,000.

In the first situation, the majority of people chose option B, the sure win. The strange thing is that in the second group, the majority chose option A, taking on risk. According to EUT, people should treat a gain of $5,000 (or $3,000) and a loss of the same amount consistently, so their attitude toward risk should not flip between the two groups. But it does: people value gains and losses differently, and that is why PT is relevant to our everyday choices.
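A quick expected-value calculation, in dollars and ignoring any utility curvature, shows why this pattern is striking:

$$
\begin{aligned}
\text{Gains: } & E[A] = 0.75 \times 5000 + 0.25 \times 0 = 3750 > 3000 = E[B] \\
\text{Losses: } & E[A] = 0.75 \times (-5000) + 0.25 \times 0 = -3750 < -3000 = E[B]
\end{aligned}
$$

The first group gave up $750 of expected value in exchange for certainty, while the second group accepted $750 more in expected losses for a chance to lose nothing. In other words, the same people who are risk averse over gains become risk seeking over losses.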

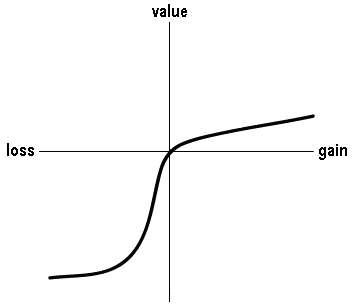

The graph above is the value function, an important part of PT. As you can see, the curve is steeper for losses than for gains, meaning that people feel a loss more strongly than a gain of the same amount. This is known as loss aversion. Along with the framing effect (which we discussed last week) and other anomalies, it plays a large role in PT.
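Here is a minimal sketch of a value function with that shape. It assumes the power-function form and the median parameter estimates from Tversky and Kahneman’s 1992 follow-up work on cumulative prospect theory (α = β = 0.88, λ = 2.25); the 1979 paper describes the curve’s shape but does not fix these numbers. The constant names and the `value` function are illustrative choices, not the paper’s notation.

```python
# Illustrative parameters: Tversky and Kahneman's (1992) median estimates.
ALPHA = 0.88   # curvature for gains (below 1, so the curve flattens as gains grow)
BETA = 0.88    # curvature for losses
LAMBDA = 2.25  # loss-aversion coefficient: how much more a loss hurts


def value(x: float) -> float:
    """Prospect-theory value of an outcome x measured from a reference point of 0."""
    if x >= 0:
        return x ** ALPHA
    return -LAMBDA * ((-x) ** BETA)


for amount in (100, 1000, 5000):
    gain, loss = value(amount), value(-amount)
    print(f"+{amount}: {gain:8.1f}   -{amount}: {loss:8.1f}   ratio: {abs(loss) / gain:.2f}")
```

Because the curvature is the same on both sides in this sketch, every loss is valued at exactly 2.25 times the gain of the same size, which is the asymmetry in the graph. The exponent below 1 also means each additional dollar matters a little less than the one before it, for gains and losses alike.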

PT goes beyond just discussing how people act irrationally when facing risky choices. It also describes other ways in which people make flawed decisions, such as quickly simplifying choices in their heads (part of what Kahneman and Tversky call the editing phase). One example is rounding probabilities higher or lower than they should be. For instance, we often round 0.1% and other tiny percentages up to 1% so we can wrap our minds around them, but this places too much weight on that outcome.
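PT formalizes this overweighting with a probability weighting function that turns stated probabilities into decision weights. The sketch below is a related but separate mechanism from the rounding example: it assumes the one-parameter form Tversky and Kahneman proposed in 1992, with their median estimate γ = 0.61 for gains. The name `decision_weight` and the default parameter are illustrative assumptions.

```python
GAMMA = 0.61  # illustrative: Tversky and Kahneman's (1992) median estimate for gains


def decision_weight(p: float, gamma: float = GAMMA) -> float:
    """Inverse-S weighting: w(p) = p^g / (p^g + (1 - p)^g)^(1/g)."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")
    num = p ** gamma
    return num / (num + (1.0 - p) ** gamma) ** (1.0 / gamma)


for p in (0.001, 0.01, 0.75, 0.99):
    print(f"stated probability {p:>6}: decision weight {decision_weight(p):.4f}")
```

Under these assumptions, a stated 0.1% chance receives a weight of roughly 0.014, many times its actual probability, while large probabilities such as 75% or 99% are underweighted. Certainty (p = 1) and impossibility (p = 0) keep their exact weights, which is one reason a sure option can feel so different from a near-sure one.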

If you are interested in studying Prospect Theory in more depth, feel free to read the original paper by Kahneman and Tversky or check out this link.